Alex Chen

Age 26 · Commuter and passive listener

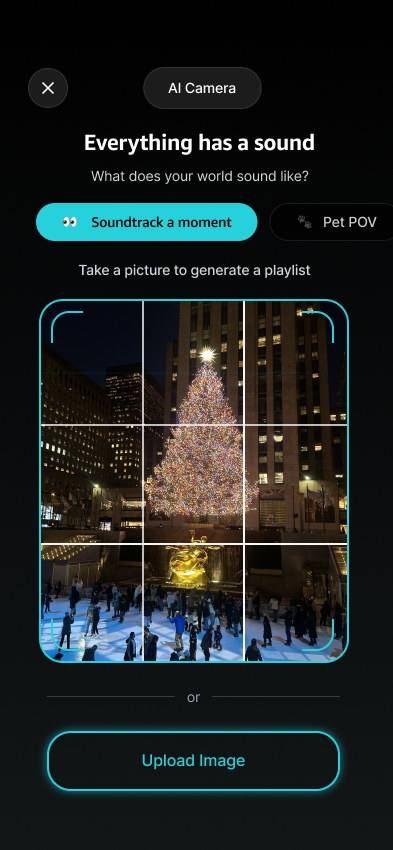

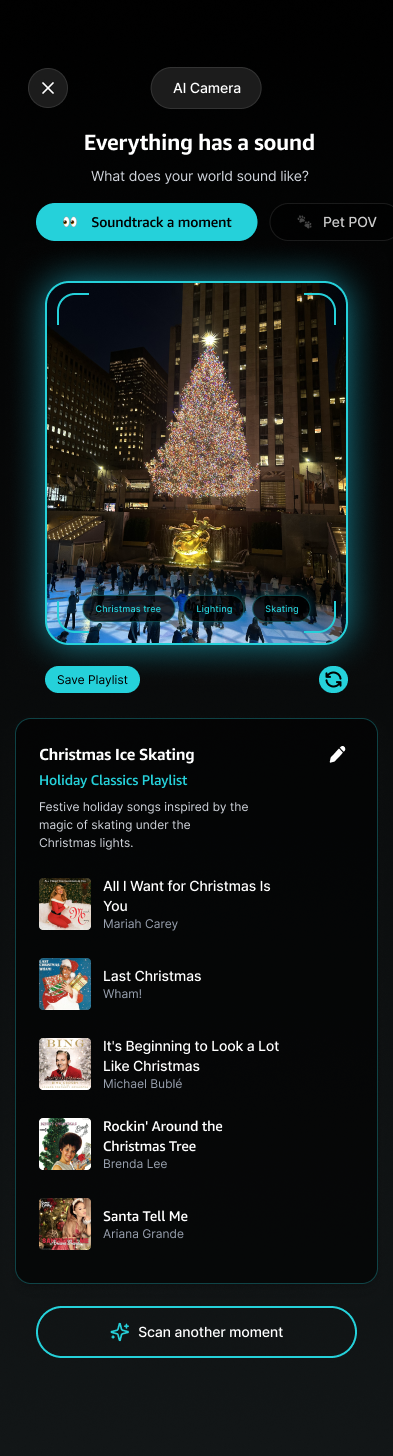

Scenario

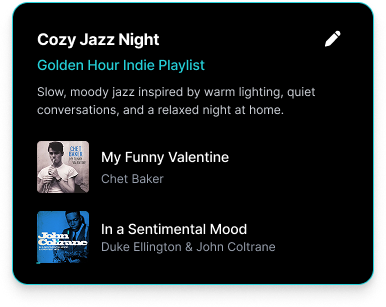

Commuting home after a long day, standing on a crowded train with headphones on. Wants to unwind but does not want to scroll through playlists to find the right vibe.

Need

A playlist that instantly matches the evening wind-down mood, with no searching or manual input.